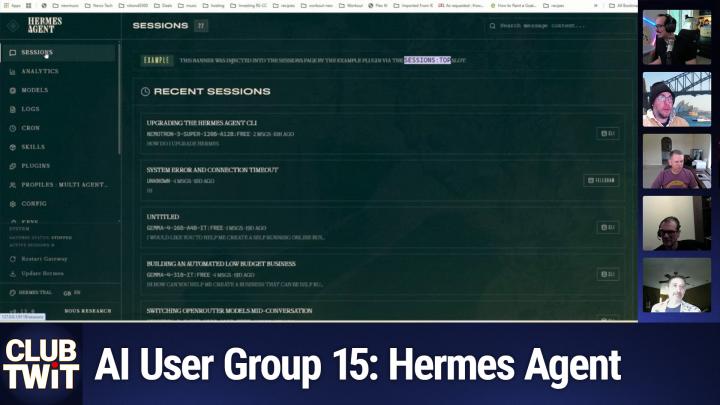

TWiT+ Club Shows 746 Transcript - AI User Group #15

Please be advised that this transcript is AI-generated and may not be word-for-word. Time codes refer to the approximate times in the ad-free version of the show.

TWiT.tv [00:00:00]:

This is TWiT.

Anthony Nielsen [00:00:03]:

Hey everyone. Welcome back to our monthly AI user group for Club TWiT. We've got a full, packed house today as usual. We've got Darren Oakey, Larry Gold, right? Yep. And I see you, Juan. BlindWiz is here, audio only. He's a regular. Alakazip is back for your second time, I believe.

Anthony Nielsen [00:00:30]:

And then we have a couple of new people, Jason and Craig M. Jason, do you want to introduce yourself and kind of where you're at in your AI experience?

Juan (BlindWiz) [00:00:44]:

Yeah.

Jason [00:00:45]:

Can you hear me okay?

Darren Oakey [00:00:47]:

Yeah, yeah. Great.

Jason [00:00:49]:

Yeah, I'm a software developer and, you know, I'm really getting into the LLM stuff, mainly using Claude Code. I've played around with trying to run local LLMs, but I've only got a MacBook Air with 24 gigs, so, you know, it's a bit tight for memory and stuff. I've played with OpenClaw and I'm really into Hermes Agent. It looks really interesting.

Anthony Nielsen [00:01:19]:

Awesome. Yeah, I mean, 24 is more than what I'm working with on my 16. Same with you, right, Larry?

Larry Gold (LrAu) [00:01:28]:

Yep.

Anthony Nielsen [00:01:28]:

Yeah. Craig, you want to introduce yourself?

Craig McFarlane (CraigM) [00:01:33]:

Sure. I'm Craig McFarlane. I'm a CTO of a software services company. We're just rolling out a new product with an MCP interface, as everybody else does, but also rolling out AI more to the entire company and team and, you know, dealing with all the vast change of all that. And it's not just the techie people, it's everybody. So, all that challenge.

Anthony Nielsen [00:02:10]:

Awesome. Well, we've been talking behind the scenes in the Discord about what we wanted to do for this meeting. Our last few meetings, we've been kind of deep in the weeds, so to speak, and we thought that we'd reset and take a look at Hermes from, you know, a zero starting position.

Darren Oakey [00:02:46]:

Yeah, a lot of people have been asking "how do I start?" type questions.

Anthony Nielsen [00:02:50]:

Yeah.

Alakazip [00:02:52]:

Or even the use case.

Larry Gold (LrAu) [00:02:54]:

Like the.

Alakazip [00:02:54]:

Yes, the use case and, you know, the brief version of: why is it something we should pay attention to?

Anthony Nielsen [00:03:02]:

Yeah. Did you want to kick it off, Larry, or.

Larry Gold (LrAu) [00:03:09]:

Yeah, I'm trying to figure out the best place to start to kick that off. Probably the use case. Maybe we'll start with that and we'll go through my current one and then maybe we'll pop into Hermes after that. Because the use case really is user dependent. I think I said before, I look at it as a personal assistant. It's for you, it's going to be individual. So everyone's going to have a different piece of what they want. I'm pressing towards the older side and I decided in my life I wanted to organize my health and my mental health and a lot of other pieces in that space.

Larry Gold (LrAu) [00:03:50]:

So if you want to show my screen, I can show you guys what I built and why I built it and give you some insight into what it is. So this is my daily dashboard. As you can see, it's connected to my Whoop, it has my workouts, it gives me basically a health score for the day. You know, how often I've had a cheat meal and how many cheat meal points I have left, my average sleep, whether I'm grateful, my kind acts. So it's tracking a lot of the things that I would do on a day-to-day basis, right? Just to see what's going on. And if you look at it, it's very unique to me. This score is what I look at, and you can tell when I had bad days or sick days where I'm not feeling well.

Larry Gold (LrAu) [00:04:40]:

The score changes and varies. This score is an aggregated total of a whole bunch of things. So if I take a look at my Whoop, right, my Whoop is going to give me certain things about my recovery and that piece of my health, but it's not tracking, say, my mental health, and it's not tracking my diet per se. It's really tracking a whole bunch of other pieces of information. The cool thing is it does a great job of showing you that since I started this and started tracking it, my weight, as you can see, is going down and I'm getting closer and closer to my goal. So this is actually working. It's a very, what I would say, connected piece, very much about me. It's all about me being me.

Larry Gold (LrAu) [00:05:26]:

Me-centric. If I go back to the dashboard, what you're looking at is my workouts. Today I walked my dog, but I've had functional fitness, I've done physical therapy, I've had rest days. I log all that. You can see the days I have cheat days or the days I'm healthy, which means all my meals were logged, and the days that are blank, which means they were incomplete. It tells you what I was grateful for. Every night it asks me what I'm grateful for.

Larry Gold (LrAu) [00:05:51]:

I put that response in there. It gives me my sleep, including my naps, and meditations are included in there. That's why some of these are pretty high. If I did something which is a random act of kindness, which I've realized I'm failing at, it has that. It tracks whether I'm following my values, it measures my energy level through the day, and then it asks me what lessons I learned for the day. Some days it's nothing, and some days there are other pieces that I have. And then whether I read or not.
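As a rough illustration of the aggregated score described here, the sketch below folds several daily signals into one number. The field names, the weights and the 0 to 100 scale are all assumptions made for illustration; the actual formula behind the dashboard was not described.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); the real dashboard's formula is unknown.
WEIGHTS = {
    "recovery": 0.30,   # wearable recovery, scaled to 0..1
    "sleep": 0.25,      # hours slept (naps included) against a target
    "meals": 0.20,      # 1.0 when every meal was logged and healthy
    "gratitude": 0.10,  # 1.0 when the nightly gratitude prompt was answered
    "kindness": 0.05,   # 1.0 when a random act of kindness was logged
    "reading": 0.10,    # 1.0 when some reading was logged
}


@dataclass
class Day:
    recovery: float
    sleep_hours: float
    meals_healthy: bool
    gratitude_logged: bool
    kind_act: bool
    read: bool


def daily_score(day: Day, sleep_target: float = 8.0) -> float:
    """Combine the day's signals into a single 0..100 score."""
    parts = {
        "recovery": max(0.0, min(day.recovery, 1.0)),
        "sleep": max(0.0, min(day.sleep_hours / sleep_target, 1.0)),
        "meals": float(day.meals_healthy),
        "gratitude": float(day.gratitude_logged),
        "kindness": float(day.kind_act),
        "reading": float(day.read),
    }
    return round(100 * sum(WEIGHTS[name] * value for name, value in parts.items()), 1)
```

A blank or incomplete day simply contributes zeros for the missing signals, which is one way the score could dip on sick days.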

Larry Gold (LrAu) [00:06:19]:

And these are things that, again, I want to track and factor into that overall score. So when you look at that overall score, it does tell you more than just something you're going to get from a Whoop or a device. I interact with it mostly through Telegram at this point. Right. So I talk to it via Telegram, and that's what's driving a lot of what I built. And when you're talking about a personal AI, it's very personal. So you're going to build something in Hermes or OpenClaw.

Larry Gold (LrAu) [00:06:48]:

It's going to be directed to you. There are things like, I'm not connecting it to my calendar because my calendar is mostly my work calendar, and that's not relevant to my personal vision. Well, my work won't let me do that anyway. But it's not part of what I'm doing. A lot of what you'll see is retirement planning, because I'm thinking about retiring.

Larry Gold (LrAu) [00:07:11]:

And I will run... yeah, let's run this, because I put in a test scenario. So it doesn't have my real data in there, but it has enough data in there. And this will run a Monte Carlo simulation to see how close I am to retirement and whether I'm good or not. So in the scenario I have, yes, I have probably a 90% plus chance, or 96% chance, of retiring based on the scenario. But you could put in your age and a whole bunch of other factors. It actually will connect to my personal accounts to get this data.

Larry Gold (LrAu) [00:07:40]:

Or use a sample portfolio, which I stuck in for today just because I didn't want to show you guys what I really have. So.
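For readers unfamiliar with the technique, here is a minimal sketch of a Monte Carlo retirement check. It is not Larry's code: the return model (independent normally distributed yearly returns), the default mean and standard deviation, and the function name are assumptions chosen only to show the idea of running many random futures and counting how many never run out of money.

```python
import random
from typing import Optional


def retirement_success_rate(
    start_balance: float,
    annual_spend: float,
    years: int,
    mean_return: float = 0.05,    # assumed average yearly return
    stdev_return: float = 0.12,   # assumed yearly volatility
    trials: int = 10_000,
    seed: Optional[int] = None,
) -> float:
    """Fraction of simulated futures in which the money lasts all `years`."""
    rng = random.Random(seed)
    successes = 0
    for _ in range(trials):
        balance = start_balance
        for _ in range(years):
            # Withdraw this year's spending, then apply a random market return.
            balance = (balance - annual_spend) * (1 + rng.gauss(mean_return, stdev_return))
            if balance <= 0:
                break  # ran out of money in this simulated future
        else:
            successes += 1  # survived every year
    return successes / trials
```

A result of 0.96 would correspond to the "96% chance" style of answer mentioned above; a real tool would also model inflation, age, contributions and taxes.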

Alakazip [00:07:48]:

So that's very in depth. But if we're going to go basic, Anthony, I'm going to take your cue. You tell me if I'm wrong. The question I had originally is, everyone knows about OpenClaw. Is this...

Larry Gold (LrAu) [00:07:58]:

Yeah.

Alakazip [00:07:59]:

Is Hermes an OpenClaw analog? Is it more secure?

Larry Gold (LrAu) [00:08:05]:

This is exactly that. This is me looking at Hermes and OpenClaw and a few other things and saying, well, I wanted to build it a little bit differently. And I wasn't trusting that, because I actually started building this in February. At that time Hermes had not come out yet, and OpenClaw had so many security issues, and I'm like, okay, let me start building this from scratch. It's written in Python. The first version was written using Upsonic and homegrown agents. I've migrated it now to the Anthropic SDK, the Claude SDK.

Larry Gold (LrAu) [00:08:37]:

It's using that as the orchestrator and the agent. All these tools are really just agent harnesses. It's a question of where you want to start from. If you're a programmer like me, you could start from a blank screen and just work with Claude and say, hey, let me add these pieces one at a time. In February, if you rewind the clock, I wasn't trusting a skill from anybody. So I wanted everything to be homegrown, and...

Alakazip [00:09:04]:

That actually is the root of the question. I'm a cybersecurity person, so I'm always nervous, right? By professional nature. I looked at OpenClaw and ran it, but I ended up using Claude Code to just create individual agents for the purposes I have in my life. Right. We all have things like this, and I'm just wondering what Hermes gives you as a benefit above either OpenClaw, whatever other options there are, or just using Claude Code or another coding agent to create your individual agents.

Larry Gold (LrAu) [00:09:38]:

I would say it just gives you a starting point if you're not a coder. If you're into code and you're not looking at OpenClaw or any of these tools to start with, you're okay to start with a blank screen. What those agents give you... let me swap my screen to Hermes. Let's see, where's my... I'll share the Hermes screen. If you look at it, right, this is the web UI, which I kind of like because it's a lot better. If you click on the skills, what it gives you by default, hopefully it comes up, is a list of skills that you can use out of the box, including connecting to your email, going to Claude Code to write code, doing some web-based stuff, looking at some data, right? Just a whole bunch, including, like, a mail thing... sorry, a news aggregator, which I didn't show you.

Larry Gold (LrAu) [00:10:37]:

So it'll take RSS feeds and create news feeds for you. So there's a bunch of built-in skills that Hermes has. And OpenClaw had ClawHub, which had a list of skills that other people built that you could import. So the difference is like starting with a kit car that you bought, where you're building your Cobra, versus one that was completely assembled. Right.

Larry Gold (LrAu) [00:11:03]:

And then you can just start playing with it. So that, I would say, is the difference.

Anthony Nielsen [00:11:07]:

Yeah, here, for example, on their Hermes Agent documentation, are all the skills, so you can see what comes built in, and there are some optional ones.

Alakazip [00:11:19]:

Okay, so that makes sense. I'm not a coder for the record.

Darren Oakey [00:11:21]:

I'm.

Alakazip [00:11:22]:

I used to be a terrible coder and then I got Claude Code. Now I'm amazing, evidently.

Larry Gold (LrAu) [00:11:26]:

So.

Alakazip [00:11:28]:

But what kind of system requirements are we looking at for Hermes if somebody wanted to get involved? I heard some pretty low numbers in terms of what it might run on.

Anthony Nielsen [00:11:36]:

Well, I mean... yeah. So it depends on whether you want to run a local model or not. Yesterday I just kind of set mine up and I've been working through it. So I have a PC in my, like, server closet or whatever. I have a little shelf there with a few computers. It's a tiny PC running Windows.

Anthony Nielsen [00:12:07]:

So they just announced that in the next few days they're going to release an official Windows version of Hermes Agent. You can run it right now through WSL2, through Linux, you know, through Ubuntu or whatever, as an option. But actually I'm using Pinokio to load Hermes Agent. I'll probably switch over to the official Windows app when they release that. And then in terms of the model, you could... I should actually stop this. I gave it a task.

Anthony Nielsen [00:12:49]:

I just wanted to show one of the skills. But I could go through the configuration, where you can plug in Nous. They have their own portal API where you can pay, say, $20 a month and they have 20 models you can choose from. For this one I'm running GLM 5.1. The benefit of using the Nous Research portal is they also have some tools that come with the subscription. So stuff like Firecrawl, which will let your agent look at websites and scrape data, and a few image models. I forget which, I think they're using Flux and stuff like that. So you get some extra tool calls, and I think another one was text to voice.

Anthony Nielsen [00:13:49]:

So if you want voice messages... but you can bring in your own subscription through OpenAI and, like, OpenRouter, stuff like that, and plug in different providers. If you do want Firecrawl, you could plug in your own Firecrawl API key and stuff like that. Did I get most of that right, Larry?

Larry Gold (LrAu) [00:14:09]:

Yeah, you got it all right. I would say it's turned into not rocket science. For hardware, if you're starting out, I would leverage OpenRouter, or if you're already paying for the OpenAI API or the Anthropic API, leverage those first. On a lot of these test ones I'll use OpenRouter because there are models that are free. Like right now, Nemotron 3 Super Free is the model I'm using here, mainly because this is not one I'm doing serious work on. But when I'm doing other work I'm actually using Anthropic's models or OpenAI's GPT-5.x or whatever, because those are better for the work I want to do.

Larry Gold (LrAu) [00:14:53]:

But I'm kicking this around and just experimenting. Setting up an OpenRouter account is relatively easy. And, you know, there's a series of models. Let's see, if you sort by price, low to high, they've got a ton of free models that you could use, even lighter ones like the Nano one. So there's definitely enough for you to use no matter what kind of horsepower your machine has.
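To make the "bring your own provider" option concrete, here is a small sketch of what a request to OpenRouter's OpenAI-compatible chat completions endpoint looks like. It only builds the request and does not send it. The function name is hypothetical, and the model id is a placeholder to be replaced with one copied from the OpenRouter model list, since the exact id for any free model is not given in this discussion.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat completion request."""
    body = json.dumps({
        "model": model,  # placeholder: copy the exact id from the provider's model list
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        OPENROUTER_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would actually send it; an agent harness does the equivalent for you once the provider and key are configured.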

Craig McFarlane (CraigM) [00:15:18]:

Right.

Larry Gold (LrAu) [00:15:18]:

If you're really nuts about security, then obviously you're going to have to buy or run something locally. Darren here has the ultimate machine for running stuff locally, and Anthony and I have video cards that can run stuff locally if we wanted to. But you'll get better responses from the hosted models. And again, if it's costing you nothing on OpenRouter, I don't see why you'd use anything else unless you really care about the personal stuff.

Jason [00:15:48]:

How much success have you had with the free models?

Larry Gold (LrAu) [00:15:52]:

With this Nemotron one? Fantastic. I wouldn't use it for coding. For coding, you've heard me say before, Claude Code has been the best thing, but I've been starting to play with GPT-5.5 because it actually is really good. But I would absolutely use Nemotron for almost all the basics that you could have. Right now I actually run a dev version which is all running Nemotron, and my prod version is running other stuff, and it's pretty close.

Larry Gold (LrAu) [00:16:21]:

I still trust the other stuff a little bit more. But starting out, I would play with Nemotron as the first one. There's also Qwen. I think there's a free tier of Qwen Coder that's also there.

Anthony Nielsen [00:16:37]:

Okay. So I actually ended my task so I could show the setup real quick. So again, I installed Hermes via Pinokio on my Windows computer. And when you... let's open it up and launch it. So the first thing you do after installing it is run the setup task. Right. So that'll launch here. Then it'll just ask you...

Anthony Nielsen [00:17:10]:

It'll walk you through the entire process of getting set up. So can you all read that? Okay, so the first thing is your provider. You can see there's a huge list. I'm using the Nous Portal. I don't even know how you... I should ask, is it "new" or "noose"? Anyways, I'm using the Nous Portal. But yeah, you've got GitHub Copilot, Google AI Studio, all the big ones. But yeah, OpenRouter is definitely a good cheap way to go about it.

Anthony Nielsen [00:17:47]:

Then if you're Darren, running your own model on a different computer, you know, there's the direct API here. So I'm not going to...

Juan (BlindWiz) [00:17:56]:

What's the cost on OpenRouter? I've actually never used it because, I mean, I pay for the Claude Max, the $200 one, and I also have the ChatGPT $200 one. I mean, do I get a lot more with those, or do they have a similar tier or token...

Anthony Nielsen [00:18:13]:

OpenRouter is pay as you go. Right. So you would load up your account with credit.

Juan (BlindWiz) [00:18:18]:

Okay.

Anthony Nielsen [00:18:18]:

Yeah.

Juan (BlindWiz) [00:18:19]:

Okay, that makes sense.

Darren Oakey [00:18:20]:

And you talk about me, but Juan's got much, much better than me. He's got two of them.

Juan (BlindWiz) [00:18:25]:

Yeah, I just bought a paired set. They're updating as I speak right now. You know, this cable is 18 inches long and it's almost 200 bucks.

Darren Oakey [00:18:38]:

Yeah, yeah.

Juan (BlindWiz) [00:18:40]:

It came with the set, but I was going to buy a second one, because I didn't realize that these DGX stations have two of these QSFP ports on the back. And so you can actually do more than just two. Everything I read said only two. But you can make them into ring networks and all kinds of stuff. Really?

Darren Oakey [00:19:01]:

I think mine's only got one.

Juan (BlindWiz) [00:19:03]:

Really? No,

Darren Oakey [00:19:06]:

you got the Asus.

Juan (BlindWiz) [00:19:07]:

Yeah. Okay. See, I Got the actual Nvidia one. So, yeah.

Darren Oakey [00:19:10]:

Yeah.

Juan (BlindWiz) [00:19:11]:

Okay. Yeah. So the Nvidia one has two of the QSFP ports, so you can actually hook up two or more. Three, four, five, six.

Darren Oakey [00:19:19]:

I don't have that sort of money.

Juan (BlindWiz) [00:19:20]:

Well, two is about... you know, that about pushed my limits there, so. Yeah, I agree.

Anthony Nielsen [00:19:27]:

So, yeah, choose your model. You could have a fallback. I'm not going to set up a fallback, so I'm going to hit no. Terminal: I'm just keeping it local.

Larry Gold (LrAu) [00:19:40]:

See, there's your mistake.

Anthony Nielsen [00:19:42]:

Is it ssh? Do I need to set up ssh?

Larry Gold (LrAu) [00:19:47]:

Yeah, well, actually keep it local for now, but that may be what's setting the security that's blocking the desktop back.

Anthony Nielsen [00:19:56]:

What we're going through now is actually the full setup. When you do it for the first time, it'll ask you if you want to do the simple one, and it'll skip over these next few questions. But: maximum tool-calling iterations per conversation. Default's 90. I don't fully understand what it means, but I'll keep it. Unless you want to add something.

Darren Oakey [00:20:22]:

Yeah. What it means is there are several things you can dial about a model. Every call to the model is a discrete thing. Normally, in the base case, it comes back with a response, but what it can also come back with is reasoning or a tool call. Now, all of that takes a long time, and you can dial the reasoning level up to the point where you never actually get a response, because they've all got some sort of maximum token level, and it just sits there thinking, thinking, thinking, and never comes up with anything. It's the same thing with tool calls: you say, do this, and it'll come back and say, run this, and whatever harness you're using will run it. Then the harness sends the result back to the model and says, here's the response. And then the model comes back and says, run this.

Darren Oakey [00:21:20]:

So what you're doing here is saying, don't run more than 90 things in one go. Right. And so that comes down to responsiveness and how far off the reservation it will go.
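The loop Darren describes can be sketched in a few lines. This is a generic illustration, not Hermes Agent's implementation: `call_model`, the reply dictionary shape and the function name are assumptions. The only number taken from the discussion is the default cap of 90.

```python
from typing import Callable


def run_agent(
    prompt: str,
    call_model: Callable[[list], dict],       # hypothetical: sends messages, returns one reply
    tools: dict,                              # tool name -> function taking and returning a string
    max_iterations: int = 90,                 # the cap discussed above
) -> str:
    """Keep calling the model until it answers or the tool-call cap is hit."""
    messages = [{"role": "user", "content": prompt}]
    for _ in range(max_iterations):
        reply = call_model(messages)
        if reply["type"] == "final":
            return reply["content"]           # the model produced an actual answer
        # Otherwise the model asked for a tool: run it and feed the result back.
        result = tools[reply["tool"]](reply["input"])
        messages.append({"role": "tool", "name": reply["tool"], "content": result})
    return "Stopped: tool-call limit reached."
```

A lower cap makes the agent give up sooner on runaway tasks; a higher one lets long multi-step jobs finish at the cost of time and tokens.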

Anthony Nielsen [00:21:38]:

Okay. Tool progress display. Yeah, so that's just showing you what tools are being run in the cli.

Darren Oakey [00:21:49]:

It's just how noisy it is, really. Do you want to see what's going on or do you just want it to do it?

Larry Gold (LrAu) [00:21:54]:

Yeah.

Anthony Nielsen [00:21:56]:

So, the context compression, I guess that's more important depending on the model you're using. I think out of the box it's 0.5 and I was playing with 0.7.

Darren Oakey [00:22:09]:

Yeah. So what this means is, you know how everything is about context length. Well, it depends on the model, but now a lot of them are like 256K, some of them are 32K, and the big ones are 1 million. So if you put 0.7 and you're in a 1 million context, then when it gets over 700,000, it'll do a compression of the previous context. It'll basically just summarize it and replace the context: rip out that 700,000 and replace it with a 50,000-token summary or something.
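The threshold arithmetic above (0.7 of a 1,000,000-token window is 700,000 tokens) can be shown with a small sketch. How Hermes Agent actually compresses is not described here, so the function name, the `count_tokens` and `summarize` helpers, and the choice to keep only the latest message verbatim are all assumptions.

```python
def maybe_compress(messages, count_tokens, summarize, context_window, threshold=0.5):
    """Replace older messages with a summary once usage passes threshold * window.

    count_tokens(message) -> int and summarize(list_of_messages) -> message are
    hypothetical helpers supplied by the caller.
    """
    used = sum(count_tokens(m) for m in messages)
    if used <= threshold * context_window or len(messages) < 2:
        return messages  # still under the limit, or nothing older to fold away
    *older, latest = messages
    # Keep the newest message as-is and fold everything before it into one summary.
    return [summarize(older), latest]
```

With `threshold=0.7` and `context_window=1_000_000`, nothing happens until the conversation exceeds 700,000 tokens, at which point the history collapses into a much shorter summary.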

Larry Gold (LrAu) [00:22:48]:

Yeah, well, every model also has different compression algorithms, so there's definitely some variance in there. And then of course Anthropic announced the unlimited context, but I have not seen that in action, and we want to see how that works. But it'd be very interesting as the context grows.

Anthony Nielsen [00:23:09]:

Let's see, then the session reset mode. You know, I'm just leaving it on the default. It says here, what does it say? "Messaging sessions (Telegram, Discord, etc.) accumulate context over time. Each message adds to the conversation..."

Darren Oakey [00:23:29]:

It's just the same as what we were talking about. It's basically going to clear the context at some point and just start again.

Anthony Nielsen [00:23:37]:

Okay.

Larry Gold (LrAu) [00:23:38]:

Now Hermes does have its built-in memory, so it does have a way to search that information, as they've discussed. Again, this is the downside, where you don't see the magic underneath to see whether that really is valuable or not.

Darren Oakey [00:23:53]:

Yeah. And the difference between memory and context, for those people listening, because you're thinking, why don't we just put everything in memory? Memory is what they call RAG, or retrieval-augmented generation. The difference is, context is there, the model's aware of it, it's all in the space. Memory is great, you can have an infinite amount of stuff there, but the model's got to know to ask for it. So you've got to do something that makes it think, oh, I should check if I know this information. And it's got to actually do a query. So it doesn't just inherently know. If you reference something and you're going, oh, that's in my memory...

Darren Oakey [00:24:33]:

Why didn't it use it? Well, because nobody really told it to use it. It's an active thing to go out and say, do I have this bit of information? And so that's the difference between having it in memory and having it in context: you've got to have some clue in the prompt to hint that it needs to go and look in the memory.
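A toy sketch can make the memory versus context distinction concrete. Everything here is illustrative: `MemoryStore`, `build_prompt` and the keyword-overlap search are stand-ins (a real system would typically use embeddings or a proper search index), and the `recall` flag stands in for whatever prompts the agent to go and look.

```python
class MemoryStore:
    """A toy long-term memory: notes live outside the prompt until searched."""

    def __init__(self):
        self._notes = []

    def add(self, note: str) -> None:
        self._notes.append(note)

    def search(self, query: str) -> list:
        # Keyword overlap as a stand-in for real retrieval.
        words = set(query.lower().split())
        return [n for n in self._notes if words & set(n.lower().split())]


def build_prompt(context: list, user_message: str, memory=None, recall=False) -> str:
    """Context lines are always included; memory only appears if a search is run."""
    parts = list(context)
    if recall and memory is not None:
        parts += [f"[memory] {hit}" for hit in memory.search(user_message)]
    parts.append(user_message)
    return "\n".join(parts)
```

With `recall=False` the stored note never reaches the model, however relevant it is, which is the "why didn't it use it?" situation described above.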

Alakazip [00:24:55]:

That's actually a really key point. At work, where I don't get to have all my beautiful machines, I have a really dumb version of Copilot, and I start out with almost like a CLAUDE.md at the start. Right. But that's an excellent point, because sometimes I'm like, I told you at the beginning to do this thing universally in this context, and it ignored me. So I think that's a great point: in your complex prompt, refer it back programmatically to the original memory statement that you started with. That's a really good point.

Larry Gold (LrAu) [00:25:30]:

And again, I had to build something that stores memory because, again, you don't want to keep the infinite length of the chat going on, but you want to know your history of what you've done. Then think about when I deal with the Whoop or whatever and I tell it my workout or I tell it my diet. It goes back and says, oh, the last 70 days or last 30 days, you did this, that, and this. Maybe you should be thinking of this. Because the AI now is giving me human responses to all the data that it has without it being in the context.

Jason [00:26:03]:

What are you using for memory right now?

Larry Gold (LrAu) [00:26:06]:

It is actually in, basically, a SQLite data store. And I've created a model for myself in there to do that. I'm thinking about changing that a little bit as it grows too much. Remember, it's only been about three months of data and the data is reasonable, but I'm thinking that I may have to do something a little bit different.
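A minimal sketch of a small on-disk memory store of the kind described above, assuming SQLite through Python's built-in `sqlite3` module. The table layout, column names and helper functions are hypothetical; Larry's actual data model was not shown.

```python
import sqlite3


def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the store. ':memory:' keeps it in RAM for experiments."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS memories (
               id    INTEGER PRIMARY KEY,
               day   TEXT NOT NULL,   -- ISO date, e.g. 2025-02-01
               topic TEXT NOT NULL,   -- e.g. workout, diet, gratitude
               note  TEXT NOT NULL
           )"""
    )
    return conn


def remember(conn: sqlite3.Connection, day: str, topic: str, note: str) -> None:
    """Store one fact the agent decided was worth keeping."""
    conn.execute(
        "INSERT INTO memories (day, topic, note) VALUES (?, ?, ?)", (day, topic, note)
    )
    conn.commit()


def recall(conn: sqlite3.Connection, topic: str, since_day: str) -> list:
    """Fetch notes on a topic from since_day onward (ISO dates sort as text)."""
    rows = conn.execute(
        "SELECT day, note FROM memories WHERE topic = ? AND day >= ? ORDER BY day",
        (topic, since_day),
    )
    return rows.fetchall()
```

A query such as `recall(conn, "workout", "2025-01-15")` is the sort of lookup that lets the assistant comment on the last 30 days without that history sitting in the chat context.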

Craig McFarlane (CraigM) [00:26:27]:

And so you're.

Anthony Nielsen [00:26:30]:

Well, but Hermes Agent has its own thing built in. Yeah, it has its own system that it runs automatically, theoretically without you having to mess with it.

Craig McFarlane (CraigM) [00:26:43]:

You can let it just percolate or do its own thing, or be more proactive. I guess that's where you're at, Larry, that you're actively pushing certain things into memory.

Larry Gold (LrAu) [00:26:54]:

No, actually I let the AI agent decide what goes into memory or not during the conversations. It's part of one of the overnight tasks it runs. It looks at everything for the day and decides what's important and stores it.

Darren Oakey [00:27:07]:

Actively. I mean, Craig's right. You've built something to specifically filter that. Yeah, yeah.

Craig McFarlane (CraigM) [00:27:16]:

No, I wasn't saying he was doing it. I know it's the agent or model doing it, but you're saying, ongoing, here's the script, or, you know, harness or structure, that I want you to follow. This kind of event is important. Remember these things in this way.

Larry Gold (LrAu) [00:27:39]:

Yeah.

Anthony Nielsen [00:27:42]:

But then, continuing on with the setup, if you don't want to use the CLI, you could set up some platforms to work through the gateway. As you can see, I already had Discord set up previously.

Darren Oakey [00:28:01]:

I'm somewhat fascinated by WhatsApp because WhatsApp is a bitch to program against. And I'd love to know how they're doing it because all of the others are actually quite easy. But WhatsApp, they seem to actively try and prevent you.

Anthony Nielsen [00:28:20]:

Yeah, I tried to. It seemed like it was pretty straightforward. The problem was you need another phone number. I was going to use Google Voice, but for some reason I couldn't create a Google Voice number using my actual phone number, I think because I use Mint Mobile, which is an MVNO, and I guess that's an issue. But it seemed like it wouldn't have been that difficult to set up WhatsApp on Hermes.

Larry Gold (LrAu) [00:28:59]:

I do recommend everyone doing this use Telegram. Telegram is pretty much the easiest way to get a bot running. And you can create as many bots as you want. There's the BotFather, and you just type /newbot. It gives you the information. You copy and paste it in. And if you screw it up, yeah...

Larry Gold (LrAu) [00:29:14]:

Just delete it and start another one. You know, create a different bot or create multiple bots. Right. So that's usually my recommendation for the beginning. We're talking about the beginner setup.
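Once BotFather hands back a token, talking to the bot is a plain HTTPS call to the Telegram Bot API. The sketch below builds a `sendMessage` request without sending it. The function name is hypothetical and the token and chat id in any call are placeholders; an agent gateway normally does this for you after you paste the token in.

```python
import json
import urllib.request


def build_send_message(token: str, chat_id: int, text: str) -> urllib.request.Request:
    """Build (but do not send) a Telegram Bot API sendMessage request."""
    url = f"https://api.telegram.org/bot{token}/sendMessage"
    body = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would deliver the message. Because a token is all that identifies a bot, deleting a misconfigured bot and creating a fresh one is cheap.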

Anthony Nielsen [00:29:23]:

Yeah, you know, that's a good call, because Discord is also a little confusing, getting the tokens and stuff like that.

Craig McFarlane (CraigM) [00:29:31]:

Yeah.

Larry Gold (LrAu) [00:29:34]:

And I would avoid. I'd avoid WeChat.

Juan (BlindWiz) [00:29:37]:

Yeah.

Anthony Nielsen [00:29:40]:

I'm not going to reconfigure. So again, this is the full setup. When you do the easy setup, I think most of this gets skipped over, but I guess you could disable and enable tool calls for each one, like the CLI versus your other platforms that you've set up. Done.

Anthony Nielsen [00:30:12]:

And then, yeah, that's it. I'm ready to launch Hermes. So, yeah, once you get it installed, it's pretty straightforward to get up and running. The question is, what do you do after that? Right.

Larry Gold (LrAu) [00:30:24]:

Yep. Yep. And again, that's what I said. It's very personal. I mean, a lot of people do spend a lot of time, you know, I would say, on YouTube videos, and you can watch other people running businesses out of it. I looked at it as a personal assistant. I was looking at it.

Larry Gold (LrAu) [00:30:40]:

What you're seeing is my Telegram. What I did do is set up a whole bunch of commands, in case I just wanted to type a command and not worry about it trying to figure out what the command was. You can see I have a daily check-in. I have an evening check-in. I can set my work-from-home schedule, because it knows the difference between if I'm home or if I'm in the office in the city. I have a different schedule. It sends me stuff at different times. I take the train.

Larry Gold (LrAu) [00:31:06]:

I catch a 6:23 train. My news feed shows up at 6:20, right? On the days that I'm home, it shows up at 7:30, which is after my workout and when I'm sitting down to have some breakfast. But all this stuff, you can see, is around my personal pieces. And then I can just talk to the assistant directly if I want. And actually I can ask it to build an app. And if you notice, I actually have an OpenClaw, which keeps bothering me because it likes to talk to me a lot. And then I have the Hermes agent also in here.

Larry Gold (LrAu) [00:31:36]:

And I have a way to actually delegate stuff, so I can hand stuff to the OpenClaw or to the Hermes agent. And I was playing with different ways to go back and forth with that. So that's really the in-depth version of what you might want to do if you're building a personal assistant for yourself. But it's very personal. The other thing I've added, and this is the free time. Remember, Leo was talking about this. So now I give it free time at night.

Larry Gold (LrAu) [00:32:06]:

And this way the model can think about anything it wants. And my God, it's really bizarre stuff. It's just completely random and out there. But it's from that, you know, free time piece that they talked about in one of the IMs. Don't worry about this. Let's see, what else could I show that's actually worth showing? I do have things like a stock screener. What I've done is, I see articles about stocks.

Larry Gold (LrAu) [00:32:36]:

I then send it the link and I say, build this analysis based on this article. And it basically does that. So one of the things it did: there was an article about Warren Buffett and how he analyzes stocks. So, you know, we'll just put in Apple, because everybody knows it's a good stock, and it'll actually do the analysis that Buffett would do and give you his input on that stuff. But the neat thing is all I had to do was give it a link to the Medium article and tell it to go build it. I didn't have to write any of this code, and I've done this with a bunch of other screeners. There's a whole one here, this Alpaca paper trading, where again somebody wrote a whole bunch of stuff about a trailing stop wizard, about wheel information, and about what Congress buys or sells.

Larry Gold (LrAu) [00:33:24]:

And basically this is supposed to follow their stuff. Sometimes it's doing well, sometimes it's not. But I just let it run to see what happens. It's just fun stuff. Again, that's what I do for a living. I'm in the financial field, so all these things are interesting. But the fact that I could just give it to my agent and say go build it, add it to the menu, and just run it for now, and run it on paper trading. And Alpaca, if you didn't know, is like a paper trading site.

Larry Gold (LrAu) [00:33:47]:

You could actually just set up and run a paper account. It's not real money and it's just fun to just watch

Jason [00:33:55]:

this through Telegram. Are you.

Larry Gold (LrAu) [00:33:59]:

Excuse me.

Jason [00:34:00]:

I say, you've just told it to build these through Telegram? Yeah, that amazes me, that, you know, you can just have a chat client. Obviously I've used Claude Code and gone through all that, but that you can actually just do it from chat is amazing.

Larry Gold (LrAu) [00:34:13]:

Yeah, well, remember, right now, and it has been for a while, it's running the Claude SDK, so it's actually calling my Claude account. Because I pay, just like you guys, I pay for a nice plan on Claude, and it's using the Claude SDK to actually build that application, and then I review it. Actually, one of the things that I've been working on is having a split, having a dev/prod environment. So it actually will build it in a sandbox, have me test it, and then promote it. That I haven't done yet. But huh.

Darren Oakey [00:34:43]:

At work I've started using this thing called Claude managed agents. I'm now in a place that's all AI all the time, and Claude managed agents basically gives you a sandbox on the net where you can just start off an agent that does this. Start off an agent, and every one is just a container that is completely isolated, and it'll just do its work. And it's amazing. You can just dial in with your phone and say, I want you to do this to my repository or something, add this feature, and then you've just got an agent. The great thing is, compared to doing it on your machine or something, you can then pick up your laptop and close it and go home. And meanwhile your managed agents are working for you. It's really cool.

Juan (BlindWiz) [00:35:32]:

How do you promote from dev to prod? You said you test yourself, right? So have you considered automating that part as well?

Larry Gold (LrAu) [00:35:40]:

Yeah, actually I could just tell it to promote when something's working, I just tell it to do it.

Juan (BlindWiz) [00:35:45]:

No, no, no. How do you deem it working? Like, do you have a bot that tests it or do you test it yourself?

Larry Gold (LrAu) [00:35:50]:

Well, yeah, you can see this is running on 5123. There's actually another one on 5124. And I test it on 5124. So I'll go into the web UI and test it.

Juan (BlindWiz) [00:35:59]:

No, I guess what I'm asking is, have you considered automating that too? Because, so, I use Playwright to automate web interfaces.

Larry Gold (LrAu) [00:36:10]:

Actually, believe it or not, it creates those tests and will do those tests, but that doesn't mean for me it's working. Again, just because something works on the screen doesn't mean it's working or that it's doing what I want. I may have given it a bad requirement. Believe it or not, in most cases, when it's failed, it's failed because I failed to give it the right instructions. It's very rarely failed on the fact that it's building something wrong. The other piece. And I know Leo has this news aggregator.

Larry Gold (LrAu) [00:36:40]:

This actually goes into my RSS feeds and then gives me, this is a Bayesian score on whether I would like to read it or not. And if you notice, a lot of these are five, so these are not great for me. So I haven't got a really good article yet today. But that's the other thing that I had it build. And I had it build the Bayesian scoring system for me. And that's.

Larry Gold (LrAu) [00:36:58]:

This is what gets sent to me via Telegram.
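
The scoring Larry describes can be sketched with a tiny naive Bayes classifier. This is only an illustration, not his implementation: the class name `InterestScorer`, the "liked"/"skipped" labels, and the 0 to 10 scale are all assumptions, and the training titles are made up.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase the title and keep purely alphabetic words."""
    return [w for w in text.lower().split() if w.isalpha()]


class InterestScorer:
    """Hypothetical naive Bayes scorer over 'liked' vs 'skipped' article titles."""

    def __init__(self):
        self.word_counts = {"liked": Counter(), "skipped": Counter()}
        self.doc_counts = {"liked": 0, "skipped": 0}

    def train(self, title, label):
        self.word_counts[label].update(tokenize(title))
        self.doc_counts[label] += 1

    def probability_liked(self, title):
        vocab = set(self.word_counts["liked"]) | set(self.word_counts["skipped"])
        total_docs = sum(self.doc_counts.values())
        log_scores = {}
        for label in ("liked", "skipped"):
            # Smoothed class prior, so an untrained class never produces log(0).
            log_p = math.log((self.doc_counts[label] + 1) / (total_docs + 2))
            total_words = sum(self.word_counts[label].values())
            for word in tokenize(title):
                count = self.word_counts[label][word]
                # Add-one smoothing; the extra +1 covers unseen words.
                log_p += math.log((count + 1) / (total_words + len(vocab) + 1))
            log_scores[label] = log_p
        # Turn the two log scores into a probability without overflow.
        top = max(log_scores.values())
        weights = {k: math.exp(v - top) for k, v in log_scores.items()}
        return weights["liked"] / (weights["liked"] + weights["skipped"])

    def score(self, title):
        """Scale the probability to an assumed 0-10 range."""
        return round(10 * self.probability_liked(title))


scorer = InterestScorer()
scorer.train("local llm agent tips", "liked")
scorer.train("running llm models locally", "liked")
scorer.train("celebrity gossip roundup", "skipped")
scorer.train("celebrity fashion news", "skipped")
print(scorer.score("new llm agent release"), scorer.score("celebrity news today"))
```

With no training data at all, every title lands in the middle of the scale, which is one plausible reason a feed of unfamiliar articles clusters around a single neutral score.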

Darren Oakey [00:37:02]:

And Juan, like you, I use Playwright, but I put a layer on top of Playwright because I've noticed Claude and other things can cheat and say things like, oh, it was too hard, so I skipped it. And things like this.

Juan (BlindWiz) [00:37:16]:

Yeah.

Darren Oakey [00:37:16]:

Which really pisses me off. But I put a layer on top, I mean a layer of BDD on top, which is behavior-driven development, which makes it much harder for it to fake things, because I say, for this feature I want this to happen. And then it uses that, imperatively using Playwright to run it. And so it's very hard for it to fake that.
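
A minimal sketch of the idea, not Darren's actual BDD skill: each feature is written as Given/When/Then steps, and the scenario only passes if every step really ran and succeeded, so a skipped step cannot be reported as a pass. `FakePage`, `Scenario`, and `run` are hypothetical names, and `FakePage` stands in for the browser page that Playwright would drive in a real setup.

```python
from dataclasses import dataclass, field


@dataclass
class FakePage:
    """Stand-in for a browser page; a real setup would drive Playwright here."""
    url: str = ""
    text: str = ""

    def goto(self, url):
        self.url = url
        self.text = "Welcome back" if "/login" in url else "Not found"


@dataclass
class Scenario:
    name: str
    steps: list = field(default_factory=list)  # (keyword, description, function)

    def step(self, keyword, description, func):
        self.steps.append((keyword, description, func))
        return self


def run(scenario, page):
    """Run steps in order; after a failure the remaining steps are marked 'not run'."""
    results = []
    failed = False
    for keyword, description, func in scenario.steps:
        if failed:
            results.append((keyword, description, "not run"))
            continue
        try:
            func(page)
            results.append((keyword, description, "passed"))
        except Exception as exc:
            failed = True
            results.append((keyword, description, f"failed: {exc}"))
    # An empty scenario, or one with any step that did not pass, is a failure.
    passed = bool(results) and all(status == "passed" for _, _, status in results)
    return passed, results


def open_login(page):
    page.goto("https://example.test/login")


def see_greeting(page):
    if "Welcome back" not in page.text:
        raise AssertionError("greeting missing")


login = (
    Scenario("Returning user logs in")
    .step("Given", "a returning user", lambda page: None)
    .step("When", "they open the login page", open_login)
    .step("Then", "they see a greeting", see_greeting)
)
passed, report = run(login, FakePage())
for keyword, description, status in report:
    print(f"{keyword} {description}: {status}")
```

The design choice that matters is in `run`: the pass/fail verdict is computed from recorded step results rather than taken from whatever the agent says about its own work.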

Larry Gold (LrAu) [00:37:50]:

And I'll give you a plug, Darren, I use his BDD skill. He's got a BDD skill up on his GitHub that I lovingly stole, but I will give him credit every time I use it. So.

Darren Oakey [00:38:02]:

But one thing is, do you mind if I just show something? Because a lot of this stuff is very in the weeds and some people have asked after this thing, how do I get started? Because, I mean, there's still a lot of assumptions. We know now there's three things that you can download. One is called LM Studio. I don't like it personally because I find it unstable on my machine. It crashes a lot. There's one called Ollama, which I use for most things because it's better for programmers. And there's one called Jan AI. And Jan AI is just a very easy thing to start with.

Darren Oakey [00:38:46]:

So I'm not selling it as a chosen thing. I don't use it that much myself. But in terms of starting, if you download it and install it, this is what you see at the start. So it's just like ChatGPT. Now, see this thing here? This is the current model. By default it downloads their model, which makes sense. And it's a 4 billion parameter model. When you see 4B, that's the number of parameters. So, 4 billion parameter model.

Darren Oakey [00:39:19]:

You don't have to get into this too much, but I'll just explain it. So, 4 billion. The 4B says this is how many parameters. Q4 says it's quantized down to 4 bits per parameter. So if you look at 4 bits per parameter, there's 8 bits per byte. So that's going to take 2 gigs of memory, right? Plus some. So that'll run on basically any machine, right? But the point is all you've got to download is Jan AI. It'll then download this model, which takes a few things and you can play with it, right? So the barrier to entry for anyone to just play with this locally is very low because it's running on your machine. It's.

Darren Oakey [00:40:05]:

There's no risk of any data going anywhere and you can talk to it, you can use it like ChatGPT. So if anybody's scared about the data or, you know, any other things, you can download something like Jan AI and just use it and you can talk to it, you can upload an image or something and get it to do things. And each of these models has different capabilities. So then you get into the weeds of what model I can run. But by default you don't even have to think about that. You just download it, you can play with it and there's really no risk and nothing that you need to worry about. Then if you go to the hub, there's lots of different models that you can play with and all you've got to do is click on a model. Again, all of these things have a B parameter and that's the key thing that you need to worry about, which is how big it is.

Darren Oakey [00:40:57]:

So that's where you go against your memory. So like a 24B thing, you're probably going to want at least 32 gigs of memory to run it at all and probably 64 gigs of memory to run it nicely. Whereas a 9 billion parameter model will run quite nicely in 32 gigs. So all of these have different pluses and minuses. There's many, many charts that you can look at. But the key thing is you can just try things that are at your level. You can click on it, click Download and once it's downloaded you just choose which model you want to use here and you can just chat with it. And so it's very easy for someone to.

Darren Oakey [00:41:49]:

I mean, obviously there's lots of settings, you can talk to it from other machines and other things and you can do all sorts of exotic stuff. But fundamentally, for someone to start, it's very easy. You don't have to know much. You just download Jan AI, download LM Studio, download Ollama, run it, choose a model and get going. So there's really no reason not to play. Like, all the other stuff you will get to. But I think everybody should. You can do that without worrying about any of your data going off your machine.
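
The arithmetic behind "4B at Q4 is about 2 gigs" can be written out as a small sketch. It counts only the model weights; the "plus some" for context and runtime overhead is not included, and the function name `weight_memory_gb` is just an illustrative choice. The rows other than 4B at Q4 assume a quantization level that was not stated in the discussion.

```python
def weight_memory_gb(params_billions, bits_per_param):
    """Approximate size of the model weights alone, in gigabytes."""
    # (params_billions * 1e9 params) * bits / 8 bits-per-byte / 1e9 bytes-per-GB
    return params_billions * bits_per_param / 8


examples = [
    ("4B at Q4", 4, 4),
    ("9B at Q4", 9, 4),
    ("24B at Q4", 24, 4),
    ("4B at 16-bit", 4, 16),
]
for label, params, bits in examples:
    print(f"{label}: about {weight_memory_gb(params, bits):.1f} GB of weights")
```

The same model at 16 bits per parameter needs four times the memory of its 4-bit version, which is why the quantization suffix matters as much as the B number when picking a download.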

Juan (BlindWiz) [00:42:26]:

Adding to what Darren just said about the models, I don't know if you guys agree, but I would say the rule of thumb when deciding what model to run on your system is based on your system RAM. Always make sure you have at least 8 gigs for your system and then, you know, base your model size on what's left after that. I think that's decent.

Anthony Nielsen [00:42:49]:

It's not just the model size though, it's also the context.

Larry Gold (LrAu) [00:42:52]:

Right.

Anthony Nielsen [00:42:52]:

You need some headroom.

Larry Gold (LrAu) [00:42:54]:

Right.

Juan (BlindWiz) [00:42:56]:

But, like, a Mac and a PC can run, you know, with four to six gigs. And so, you know, most Macs are 16 at the lowest level nowadays. Right. I mean, most people tend to be there, and so a 4B model gives you room on that.
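
Juan's rule of thumb and Anthony's point about context can be combined into a rough check. This is a sketch under stated assumptions: the 8 GB system reserve comes from the discussion, while the 2 GB default for context headroom is a placeholder, since real context memory depends on the model and the context length you configure.

```python
def fits_in_ram(total_ram_gb, model_gb, system_reserve_gb=8.0, context_headroom_gb=2.0):
    """True if the model plus context headroom fits in what is left after the OS reserve."""
    available = total_ram_gb - system_reserve_gb
    return model_gb + context_headroom_gb <= available


# A 16 GB machine with a model whose weights take about 2 GB
print(fits_in_ram(16, 2))
# The same machine with a model whose weights take about 12 GB
print(fits_in_ram(16, 12))
```

Treat a passing check as "worth trying", not as a guarantee of good speed; a tool that knows your actual hardware will give a better answer.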

Anthony Nielsen [00:43:14]:

And then like I think Larry, you, you shared this. But the. Can I run AI?

Larry Gold (LrAu) [00:43:20]:

Can I run AI? Yep.

Anthony Nielsen [00:43:21]:

Let me pull that up.

Larry Gold (LrAu) [00:43:23]:

Yep, I have it up if you want it. You got it up.

Anthony Nielsen [00:43:26]:

Yeah.

Juan (BlindWiz) [00:43:27]:

So

Anthony Nielsen [00:43:29]:

yeah, like I have a. I have also my old added PC in my little closet over there too, which has an RTX A4000, 16 gigs of RAM. So then, yeah, you have 16 gigs of VRAM and then you can say that you have, you know, whatever system RAM. I'm not sure, like, ideally you're not going to spill over to there, but then it'll give you some ideas of what will work well and not so great.

Darren Oakey [00:44:03]:

Yeah.

Larry Gold (LrAu) [00:44:04]:

The other alternative, again, if you are paying for Claude Code or any of the programs, their thick client app is my new favorite tool to actually work in, just because, again, as Darren was showing, it's very well put together. It's a good mixture of whether you're coding, you're doing co-work or just chat, and the balance between them. So it's a good starting point. Also, Codex has the same one, they do the same kind of thing. They have some other features where they have integration with the browser where Claude Code does not. And then even OpenCode, which is an open source one which is free, has a thick client app also that you can download, install and again do the same thing. Again, in each case you're not using a local LLM, you're using a remote LLM. Those are not ones to run locally. You can point it to an Ollama or LM Studio instance, as Darren's pointing out, but they are again other beginner ways to look at it or start. As I said, I've stopped using the command line Claude Code and have gone to the thick client, just because it feels a little bit more intuitive for certain things and I can bounce between setting something up or not setting something up.

Juan (BlindWiz) [00:45:23]:

Have you. When you. You're talking about the Claude desktop client, right?

Larry Gold (LrAu) [00:45:27]:

Yep.

Juan (BlindWiz) [00:45:28]:

When you run that, do you ever find your system getting dragged down after a few hours? Because Claude Code, the terminal, doesn't do that. The GUI does, right?

Larry Gold (LrAu) [00:45:44]:

Yeah, yeah, yeah. The Mac, well, I find it's not the most incredibly stable thing. Yeah, I agree. I could agree with that. But look, if I'm coding for four hours, I need a break, so I use it as a break reboot.

Juan (BlindWiz) [00:45:59]:

Right?

Larry Gold (LrAu) [00:46:00]:

Yeah, well actually, no, actually I just restart the Claude GUI and again, I don't think I'm coding at home that long. At work it's very different. At work I use the Claude Code terminal app because we do not have the desktop. That's, I'm coding eight hours. At home, if I get two to three hours in on a day, I'm very happy. That's usually when, as you said, it starts to go awry. I do think that's a context thing and I have to figure out how to work the context a little bit better. They do now have a little circle on the bottom right that tells you your context length.

Larry Gold (LrAu) [00:46:30]:

They're getting closer to giving you better real-time graphical information. Codex, I think, does the same thing. VS Code does, which is the tool I've used other places also.

Darren Oakey [00:46:42]:

Can I show it again?

Craig McFarlane (CraigM) [00:46:45]:

Yeah, yeah.

Darren Oakey [00:46:47]:

I'll just share a window. So nowadays for work this is spinsy. I'm using Codex, and so Codex is just like the command line except you can see all the sessions that you're doing. So you can see, like, I've got a session saying enforce one BDD per feature. So it's going through my feature matrix and enforcing BDD. Create a scheduled runner for this, configure DataGrip access for this, a secure access and stuff. But the point is not what I'm doing. But the point is, over Claude Code.

Darren Oakey [00:47:30]:

The experience is exactly the same as using the Claude Code UI. But you can kick off, because you tend to kick off like 12 of them at one time. You can see what's going on. You get a blue dot when it's done and ready, you see where they're going and everything. And so I find it currently the best experience for actually doing work on those things, because you can manage quite a lot of things going on at the same time.

Juan (BlindWiz) [00:48:02]:

So.

Anthony Nielsen [00:48:08]:

Nice. Any. Anyone have any questions or things they want to bring up? Even Craig. You've been awfully quiet.

Craig McFarlane (CraigM) [00:48:19]:

Yeah, no, it's, you know, it's interesting. So most of what you all were talking about were, you know, your own personal environment and set up. And I've been struggling more with how do I help others, not just one at a time, but as a, as a group of, you know, whether it's folks in marketing or sales or operations or you know, even coding where they need to share, you know, obviously documents. We're using Google Workspace and whatnot. But also things like various skills and other things and give it, you know, my engineering team is, you know, all git and everything else, so that's not an issue, but the rest of the staff is not. And so a lot of these tools are super tech focused and they get really prickly when you ask somebody, oh yeah, we'll just do these things and like what are you talking about? So, you know, part of it is, well, you know, let us do our thing, we'll get up to speed and then we'll pull you in. But. And that's generally the way a lot of this stuff has been going.

Craig McFarlane (CraigM) [00:49:48]:

But it'd be great to have almost like a plug and play kind of thing. Like, just going through OpenClaw or Hermes installs, there's so many questions that are like, how do I know that? You know, I can barely figure it out. And so for somebody that's not, that's pretty daunting.

Darren Oakey [00:50:17]:

I built something exactly like what you're saying for work. As I said, for work, we're using Claude managed agents to do everything. So I built something called Cortex, which is just a conversation with an instance of Claude, but a high-level instance of Claude that has tools to create an agent. So basically anybody who's not technical at all, they just get a single chat, and everybody gets their own chat with Cortex, and it's the same continuing conversation. But you can be literally looking at the website and saying, oh, I want that color to change to blue or something like that. And they can just say that.

Darren Oakey [00:51:08]:

But in the background what it does is it kicks off a Claude managed agent with that request, and then the Claude managed agent happens and everything by itself. And then it spits out everything from the Claude managed agent. But they don't even know how to kick off the agent. They don't have to know anything about anything like that. They've just got a chat window and they can just talk to it, but they can ask questions about it. Because the over-chat is a Claude instance of its own.

Darren Oakey [00:51:37]:

It can intelligently respond to the questions they ask, and so they can just chat to it, but it will kick off tasks by itself. So you can have someone non-technical doing technical tasks. And each of these agents obviously goes through Git and goes through CI in the background, because there's a big system message associated with it. Everything goes through the proper path, but it allows someone completely non-technical to just say I want this change and it'll happen.
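
The shape of that chat layer can be sketched roughly as follows. Everything here is hypothetical: the names `ChatFrontEnd` and `AgentTask` are invented for illustration, the keyword check stands in for the model deciding whether a message is a change request, and queuing a task stands in for starting a real managed agent.

```python
from dataclasses import dataclass, field
from itertools import count

# Placeholder heuristic; in the system described, the chat model makes this call.
CHANGE_WORDS = ("change", "add", "fix", "remove", "update")


@dataclass
class AgentTask:
    task_id: int
    requested_by: str
    instruction: str
    status: str = "queued"


@dataclass
class ChatFrontEnd:
    """One continuing conversation per user; change requests become agent tasks."""
    tasks: list = field(default_factory=list)
    history: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1), repr=False)

    def handle(self, user, message):
        self.history.setdefault(user, []).append(message)
        if self._looks_like_change(message):
            task = AgentTask(next(self._ids), user, message)
            self.tasks.append(task)
            return f"Started task {task.task_id}; it will go through the normal review pipeline."
        return "No task started; answering as a normal chat question."

    def _looks_like_change(self, message):
        words = (w.strip(".,!?") for w in message.lower().split())
        return any(w in CHANGE_WORDS for w in words)


front_end = ChatFrontEnd()
print(front_end.handle("sam", "I want that color to change to blue"))
print(front_end.handle("sam", "What does this page do?"))
```

The user never sees `AgentTask`; they only see the chat replies, which is the point of putting a conversational layer in front of the agents.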

Craig McFarlane (CraigM) [00:52:11]:

Yeah, that sounds perfect.

Anthony Nielsen [00:52:13]:

I mean, that's definitely something I've been thinking about and been mulling over without a good answer, too. But I mean, these skills are pretty much, more or less, like, you can just drop a skill you create in Claude and probably drop it into Hermes. Right. Or at least, if you give it, they could probably format it or make it usable. Right. I'm guessing, like, if you had it interpret for them.

Juan (BlindWiz) [00:52:48]:

Right?

Anthony Nielsen [00:52:48]:

Yeah, yeah. Like I was just thinking like maybe you would have like for me like where it is like yeah, we got a lot of people who are not super tech savvy. Maybe I could have a Google Drive with a bunch of zips with skills that are already prepackaged and then a directory of what each does and stuff.
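
A directory like the one Anthony describes could be generated rather than written by hand. The sketch below assumes each zip contains a `SKILL.md` file with a `description:` line near the top, which is how Claude-style skills are commonly packaged; the folder layout and the function names `skill_description` and `build_catalog` are illustrative assumptions.

```python
import zipfile
from pathlib import Path


def skill_description(zip_path):
    """Return the 'description:' line from SKILL.md inside the zip, if present."""
    with zipfile.ZipFile(zip_path) as archive:
        for name in archive.namelist():
            if Path(name).name == "SKILL.md":
                text = archive.read(name).decode("utf-8", errors="replace")
                for line in text.splitlines():
                    if line.lower().startswith("description:"):
                        return line.split(":", 1)[1].strip()
    return "(no description found)"


def build_catalog(folder):
    """One line per zip in the folder: '<zip name>: <description>'."""
    lines = []
    for zip_path in sorted(Path(folder).glob("*.zip")):
        lines.append(f"{zip_path.stem}: {skill_description(zip_path)}")
    return "\n".join(lines)
```

Calling `build_catalog` on a locally synced copy of the shared folder returns plain text that can be pasted into a document for people who will never open the zips themselves.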

Darren Oakey [00:53:08]:

Yeah, although what I'm talking about would be like a layer on top of Hermes. So someone can just. It's literally a website that they, they go to. You say go to go to Cortex thing and then you can talk to it. Right. So it would be a layer above it that they just ask what they want to do and then it would tell Hermes what to do. So the chat layer is interpreting between them and Hermes. So they don't even have to know about the existence of Hermes or skills or anything like that.

Darren Oakey [00:53:40]:

Or in my case Claude managed agents. They just know their need.

Craig McFarlane (CraigM) [00:53:45]:

Yeah, you can say I need a daily update of these things on these different systems and I need it in this kind of format and I need it by 7 a.m. Yeah, exactly.

Larry Gold (LrAu) [00:54:00]:

I think what you're asking for is what all of the, the big vendors are chasing for because they, the techies have bought in.

Juan (BlindWiz) [00:54:06]:

Right.

Larry Gold (LrAu) [00:54:06]:

The techies are, you know, the geeks are as deep in either OpenClaw or Hermes or Agent Zero, you name it. There's a multitude of them, and Anthropic and Microsoft and OpenAI, they're all chasing what you're looking for. And Darren, he's built, as you said, a very unique system that handles it. But I would go back to the question: forget about all these tools. What are the tools your people are using and how disconnected are they, and then think about how the agents can connect those tools. Because when I look at a process, I look at processes and say, hey, how many times are they copying and pasting, how many times are they dragging and dropping, how many times are they going across systems or doing things, and then how can I streamline that process?

Craig McFarlane (CraigM) [00:54:56]:

Right.

Larry Gold (LrAu) [00:54:56]:

And then the agents are built on that, and the IT staff should be looking at those kinds of problems to solve and either using something like what Darren built or other tools and trying to figure out how to connect those dots. Right. These tools, like Hermes or OpenClaw, I don't think are built for the average user to drop and play.

Craig McFarlane (CraigM) [00:55:16]:

Right.

Larry Gold (LrAu) [00:55:17]:

And again, if they want to use these chat tools, it's almost up to IT organizations to build this infrastructure, allow the systems that they build to talk to these agents and then let the users interact with some kind of agent front end, as it were, like Darren did, or, you know, one of the vendor tools that are out there that have this stuff. So this is coming from, this is kind of what I do, and I try to look at these things, and I even wrote that one of the things that agents have shown me is how disconnected software is in most organizations. When you talk to anybody I do

Craig McFarlane (CraigM) [00:55:56]:

and yeah, finding all the connections between applications of big companies so they can do transformation work and, and yeah, it's, it's insane. And some of it is connecting those dots and helping them with that work. But some of it is also just analytics. Like hey, I'm going to have a sales meeting with this company, do a competitive analysis or you know, some sort of analysis on it that's geared around my strategy or this and so that I'm going into the meeting prepared and that sort of thing. It's not really looking at all the different systems. It's gathering data from the Internet and assembling it based on.

Larry Gold (LrAu) [00:56:38]:

Yeah, but the Internet is another system. The Internet is, to me, another source. And you're asking your research agent to say, here's our strategy, here's our this, compare it to N competitors that you have. Right. I mean, one of the great things: quarterly reports are on the Internet.

Darren Oakey [00:56:58]:

Right?

Craig McFarlane (CraigM) [00:56:58]:

Right.

Larry Gold (LrAu) [00:56:58]:

And you could grab those and compare two companies or multiple companies. Right. And building a, whether, like, a tool like NotebookLM, which does great RAG stuff, that is also free. Right. But I always look at what's the problem you're trying to solve, rather than just trying to get an agent and an AI against it and then doing it. But I also do believe sometimes you just want to give the people a tool and let them play. Right.

Larry Gold (LrAu) [00:57:24]:

And then come back to you and say I have an idea.

Craig McFarlane (CraigM) [00:57:28]:

Right. Yeah. And that's the thing I, I want, you know, you know, just staying on the sales front. One person using, you know, some skill that he got from somebody and building some analytics around a company before he goes, meets, and then the guy, you know, three doors down hears about some great meeting he had and he said, well, I use this and, and then he starts using it. And how do they compare notes and, and benefit from both of, you know, each other using it, saying, oh, I customized, I added it, I told it I wanted this thing or I wanted more bullets and fewer paragraphs. Be able to have them collaborate on how to improve that.

Larry Gold (LrAu) [00:58:16]:

Yeah, and that's spot on.

Craig McFarlane (CraigM) [00:58:19]:

Like,

Larry Gold (LrAu) [00:58:21]:

you know, and I think again, it's, it's a matter of finding the right tools you can use or finding what tools your organization already has.

Juan (BlindWiz) [00:58:29]:

I think that's the, I think that's the next gold rush in this, in this generation that we're in right now. The AI is, I would say the current gold rush. Right. But I think the next one is a subset of that which would be bringing AI. Chat does bring AI to kind of the layman, but the tooling behind chat, the, the, the skill sets, the tooling, all the advanced stuff that we do that we use easily the layman, you know, like we were talking, they don't, you know, how do I add a skill? What's a skill? You know what I mean at the end of the day. Yeah. That whoever breaks that, you know, you know, is gonna make a killing because, you know, there's, you know, how many of, for how many of us, how many are, are there of the layman? Right. There are way more.

Juan (BlindWiz) [00:59:14]:

And so they're the ones that are going to be using the tools that we develop at the end of the day.

Darren Oakey [00:59:19]:

Yeah, well, this is my current business, or the current startup that I'm in: we're basically going in and figuring out how to add AI to businesses where people don't know how to do that.

Larry Gold (LrAu) [00:59:35]:

Yeah. And I do think, again, every one of the big competitors and even internal companies are trying to build those things. Right. And it's going to take a fair amount of time and it's going to take a lot of, you know, trial and error to see what works, and what works in one company doesn't work in another company. But they're all pushing towards the same thing, trying to get this out of, you know, the IT world and into the mainstream. I mean, ChatGPT, just as an app, is one of the most downloaded apps.

Craig McFarlane (CraigM) [01:00:07]:

Right.

Larry Gold (LrAu) [01:00:07]:

But how many businesses are using them, you know, correctly?

Juan (BlindWiz) [01:00:11]:

Right, Exactly.

Larry Gold (LrAu) [01:00:12]:

Probably not a lot.

Juan (BlindWiz) [01:00:13]:

Exactly.

Larry Gold (LrAu) [01:00:14]:

You know, yeah.

Craig McFarlane (CraigM) [01:00:15]:

One of the things I loved about the whole vibe coding surge, you know, earlier in the year or at the end of last year, one of the things I thought was an awesome outcome of it is people started thinking about their needs in a more structured way. Because if they weren't a, you know, techie or product manager type person, they would just say, here's my need, and they would describe it and have it built, and then it'd be like, oh wait, no, I want this to keep scrolling, or I want it. And then they learn and then they build another, and then they realize, oh, if I think about it and I want these views and operate. So in that whole vibe coding thing, they're learning how to define their need in a way that it's easier to build. You don't necessarily want it in a one shot, but at least it's not going to take 20 shots to get something that's usable. They're not, like, throwing things away over and over again.

Larry Gold (LrAu) [01:01:19]:

Craig, have you given your team spec-driven tools to work on specifications? Whether it's Spec Kit, GSD, BMAD, any of those tools like that?

Craig McFarlane (CraigM) [01:01:31]:

We have an app, a million lines of code, that we use. It's more of an enterprise thing. We've got different departments all contributing either to software or services or whatnot. And like I said, we're just rolling out the MCP interface to it. And the tough thing with product management, you're always looking out six months or 12 months and saying, where's the world then? Because it's going to take me a little bit to build that thing so that I'm ready when the market is. And it's super hard looking at, well, where are enterprises going to be with how they approach AI? Are they going to be comfortable with running things? Like, right now we bring up AI in the enterprise and they're a little skittish, and it's like, well, we let these people do it in a sandbox or whatever, and these people, we don't want them touching it. And these people, they can only use this model, and it's super locked down and controlled. Meanwhile a whole bunch of people are using it on the side without telling anybody. And so what's that going to be like in six months? And I think a lot of these things, like you're saying, will get worked out because, you know, OpenAI and Anthropic

Darren Oakey [01:03:00]:

Google, they're all... The funny thing is, the problem with everything, like you guys both said, the trouble is most enterprise stuff is locked up in many different systems. And the thing that I don't see any solution for at the moment, and in some ways is the current holy grail, is it's all about authentication. Most of the effort is getting freaking access to each of these systems. You've got to get access and you've got to store the secrets somewhere. But just getting access to these things, like, often these things, like mail things and everything, everybody's going two-factor, which is the exact opposite. They're all trying to prove that people are involved. But now we don't want people involved. We want agents to go straight to this thing. So it's like this arms race.

Anthony Nielsen [01:03:57]:

I shouldn't be saving my API keys in an Apple Notes page.

Darren Oakey [01:04:04]:

Yes.

Larry Gold (LrAu) [01:04:04]:

No, no. A text file. CSV file. Craig, what I was asking for is

Darren Oakey [01:04:11]:

there's no solution for this at the moment. How do we generally just get access to all the stuff we need to get access to?
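
The two-factor problem Darren raises has no quick fix, but the narrower point about where keys live does have a common baseline: read them from environment variables at startup instead of keeping them in notes or text files. A minimal sketch, with `get_secret`, `mask`, and `MissingSecret` as illustrative names and `EXAMPLE_API_KEY` as a made-up variable name:

```python
import os


class MissingSecret(RuntimeError):
    """Raised when a required secret is not set in the environment."""


def get_secret(name, environ=None):
    """Fetch a secret from the environment, failing loudly if it is absent or blank."""
    environ = os.environ if environ is None else environ
    value = environ.get(name, "").strip()
    if not value:
        raise MissingSecret(f"Set the {name} environment variable before starting the agent.")
    return value


def mask(value, visible=4):
    """Hide all but the last few characters so a key can be logged safely."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


# Example with an explicit mapping instead of the real environment
demo_env = {"EXAMPLE_API_KEY": "sk-demo-1234567890"}
print(mask(get_secret("EXAMPLE_API_KEY", demo_env)))
```

This only keeps keys out of files and logs; a dedicated secrets manager is the usual next step once more than one person or agent needs the same credentials.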

Larry Gold (LrAu) [01:04:19]:

Yeah, Craig, I was asking more about spec-driven development: getting your product managers and business people who have questions to use it, because it uses AI and it has an interactive chat. And then when they're done with that chat, the specification can be handed over to IT, and it gives you a better way of writing code or generating code. We talk about the bottlenecks to vibe coding: requirements is one of them, and then code reviews is the second. Those are the two places. And we're just going to solve the requirements by using spec-driven development. And then hopefully AI will get better at code reviews,

Craig McFarlane (CraigM) [01:04:58]:

code review and then testing. Mentioned earlier that it's, it's, it's not just running through the, hey, it's working as you expect. But you hear some dumb things. And

Larry Gold (LrAu) [01:05:14]:

that's when Darren kicks in with his BDD, because that solves some of that problem.

Anthony Nielsen [01:05:22]:

Did you want to talk about anything, Jason?

Jason [01:05:26]:

No, I don't think so, really. I'm just interested just to, you know, ask the odd question and stuff. Yeah,

Anthony Nielsen [01:05:36]:

Larry, so now that I have Hermes set up, how do I get to the web UI? Like, we didn't even get that far.

Larry Gold (LrAu) [01:05:42]:

Okay, so you're in the command line. You type hermes dashboard. Go back to your piece. Is it completely installed? Hopefully it's the latest version. We'll find out.

Anthony Nielsen [01:05:55]:

All right, let me go to my. Okay, here we go.

Larry Gold (LrAu) [01:06:00]:

So at the command line you should be able to type hermes dash... no, exit out. Exit in the CLI. Yes. Yeah, get out. You're going to get to the command line. You're going to get back to the command line because you have to launch it not with the TUI. You're going to have to launch it with the GUI.

Larry Gold (LrAu) [01:06:16]:

Type hermes dashboard and it should launch a browser.

Anthony Nielsen [01:06:22]:

Hold on. Oops. Okay, hold on. I mean, because I'm running it in a different.

Larry Gold (LrAu) [01:06:29]:

Oh yeah, you're running it. That's interesting.

Anthony Nielsen [01:06:32]:

No, no, I. There's. Hold on. Need PowerShell.

Larry Gold (LrAu) [01:06:40]:

Okay. You should be able to type hermes, space, dashboard, one word. Yep. And it should give you a URL that you can copy and paste. This will only work on the local machine. It does not work remotely.

Anthony Nielsen [01:07:03]:

Okay. Yeah, that's okay. Hold on, I need to grab that. Then let me

Larry Gold (LrAu) [01:07:10]:

see. Mine comes up pretty instantly once I type it, but. Oh, it's building. Ah, so it's building it. That's interesting. Yeah,

Anthony Nielsen [01:07:21]:

I need a. Let me grab my remote instance.

Juan (BlindWiz) [01:07:27]:

Are you remoting into your little windows?

Anthony Nielsen [01:07:29]:

Well, okay, so this is my PC remoted in. This is Pinokio, where you can set it up so you can see other Pinokio instances in your network. So I have multiple ways to get to it. Like this. I was using it just in my browser earlier. Local browser. But I guess it's going to take a while to build it out. Cool.

Anthony Nielsen [01:07:56]:

Yeah,

Larry Gold (LrAu) [01:08:01]:

it's funny, it's not easy. Oh, what is throw. It may not work with Pinokio because it may not have the.

Anthony Nielsen [01:08:11]:

Could be. Yeah, again, I'm gonna. Once the official Windows version is released, I'll probably.

Larry Gold (LrAu) [01:08:20]:

Desktop version.

Anthony Nielsen [01:08:20]:

Yeah, yeah.

Larry Gold (LrAu) [01:08:23]:

As I said, I run it in WSL on Linux, used the curl command to install it, and it showed the same exact process you went through to do the install. The same thing you get. And you just type hermes dashboard and you get the GUI, or you get the web interface, which gives you a URL that's just.

Darren Oakey [01:08:41]:

Yeah, I just had a look at it. I mean, it's using the wrong venv. Like, see how you're doing Conda. That's setting up a virtual environment and it's then not running under that virtual environment. So you can probably play with the command line and get it working. But yeah, it's. It's. Yeah, that's.

Larry Gold (LrAu) [01:09:05]:

Well, it's running. He's running through Pinokio.

Anthony Nielsen [01:09:07]:

Yeah, it wasn't like. Yeah, because. Yeah, I didn't do the WSL2 thing.

Larry Gold (LrAu) [01:09:13]:

Yeah. If you show my desktop real quickly, you could see what I just.

Anthony Nielsen [01:09:17]:

You need to add that back in.

Larry Gold (LrAu) [01:09:19]:

I'll go. Cool.

Juan (BlindWiz) [01:09:22]:

Does Hermes run on Node.js or is it standalone?

Darren Oakey [01:09:26]:

Well, it looks like, given that error message, it looked like it was Python.

Juan (BlindWiz) [01:09:31]:

Oh, it is. Okay.

Darren Oakey [01:09:32]:

I didn't hear that because that was a Python error message.

Juan (BlindWiz) [01:09:36]:

It's Python.

Larry Gold (LrAu) [01:09:37]:

Got it back now,

Darren Oakey [01:09:42]:

Although the language doesn't matter, I'm writing everything in Go just because it's much faster.

Juan (BlindWiz) [01:09:49]:

Yeah.

Larry Gold (LrAu) [01:09:49]:

So you can just see I'm running this at the. I have a Linux instance and this is running on a really old laptop, but it's fine. And you just type hermes dashboard and it gives you the URL and you just basically click on that URL and that's where you are. Right. So it's very easy to run this from WSL, you guys. You can also run this from PowerShell. I think they have a PowerShell install also, if you wanted to do it.

Craig McFarlane (CraigM) [01:10:17]:

Sorry.

Darren Oakey [01:10:18]:

Oh, I was just going to say, if people are worried about their machine or worried about setting things up or stuff like this, these things, because all the work is happening elsewhere, you can run it on anything. And in particular you can run it on Docker. And Docker is like a complete sandbox. And so you can just create a Docker instance, even on a NAS. Most NASes, like Synology NASes, have a container manager. Yeah.

Darren Oakey [01:10:47]:

And so you can literally just open a container and run it on your NAS, in the container. And that has the advantage that it's always running, but it's also got no direct access to anything and it's not messing up your machine.

Larry Gold (LrAu) [01:11:03]:

Yeah. And that's again why I like WSL, because you can containerize it, you can block certain things, and it allows me to run like a bunch of Linux, and I know Linux very well, so I can get it to do anything I want inside the Linux space. Yet I still have access to it from localhost in the browser.

Anthony Nielsen [01:11:23]:

I'm just on a tangent on the Skills tab. Is it just showing you what's installed or does it show you everything that is.

Larry Gold (LrAu) [01:11:31]:

This is what's installed by default. This is not the library, because you can disable stuff here and enable it. So this is where you enable or disable. I think the search is still going to be what's local. It's not what you can get from their website, because you can download more skills, and even on the plugins there's more stuff you can download and install. You can install stuff right from Git. Also, I haven't seen this GUI in a long time and it's actually really good.

Larry Gold (LrAu) [01:12:05]:

So it's making me want to sit there and play with it. So I now have a weekend activity

Anthony Nielsen [01:12:10]:

I could show you. So I've only just set up the agent and then I had it make one thing, and that was using the Hyper frames skill. It creates video, but the underlying technology is HTML, so it's pretty accurate on what it... Yeah, let me do a video share here. And again, I didn't really give it anything. It doesn't know I work for TWiT or anything. I didn't give it specs or what our color scheme is or what we do. I just said create a promo for TWiT TV and this is what it came up with.

Craig McFarlane (CraigM) [01:12:55]:

Since 2005, TWiT has been the original tech podcast network.

Larry Gold (LrAu) [01:13:00]:

From Security Now to MacBreak Weekly,

Craig McFarlane (CraigM) [01:13:02]:

Windows Weekly to Intelligent Machines, expert hosts you trust covering the tech you need. Independent, unfiltered, fearless. Listen free at TWiT.tv.

Anthony Nielsen [01:13:12]:

I mean, that's... that's not bad for, like, I didn't give it any direction or, you know, information. It went out and kind of just did its thing.

Juan (BlindWiz) [01:13:22]:

And how long would that have taken you by. By hand, without AI? Oh, how long would that. Just that.

Anthony Nielsen [01:13:29]:

That would have taken me like a good part of a day, you know.

Juan (BlindWiz) [01:13:32]:

And then how long did it take you? Seconds. Write the prompt and then how long?

Anthony Nielsen [01:13:36]:

Well, the seconds. Yeah, the second. No, yeah, it was a one sentence prompt. I didn't give it anything. And then it like, you know, I don't know, 10, 15 minutes later.

Juan (BlindWiz) [01:13:44]:

Yeah. I mean that's just crazy. And it's just. That's the worst it's ever gonna get.

Larry Gold (LrAu) [01:13:50]:

Yeah, yeah, right.

Craig McFarlane (CraigM) [01:13:52]:

That's just from an AI perspective, I. I find like, you know, being around for a while, I have rules of thumb of, well, you know, given this, we should do those sort of like decisions and other things of how much time or when to invest in different things. And I, I find that all of my metrics are like, that are in my head or gut feels. It's like somebody asked me for something the other day and instead of giving them back, well, here's the structure or here's an app that I think you might want to build, it was easier just to build it and say, well, was this the whole idea? It's the same thing as, I was going to write you a short note, but I didn't have time, so here's a long note. It's the same sort of thing: I didn't have time to describe the app, so I just built it.

Juan (BlindWiz) [01:14:46]:

Yeah,

Larry Gold (LrAu) [01:14:50]:

it's great. I do think the notion of prototyping, business prototyping, is going to be a thing, to where we'll hand the business some kind of structure where they could just type in a bunch of stuff, how the screens were there, everything were there. They'll then send it to us to figure out how to connect the dots, how to get the enterprise data stored there, how to get the security done, how to do all those other pieces. That's just because I work at a large enterprise. I do think other tools like Replit or Lovable are getting close to doing that for just smaller mom and pops. You've got PowerApps and Microsoft, you've got a lot of different ways to go with this. But I do think development is changing. My calls this week, a lot of them have been discussing how do you change it, how do we get things done faster, how do you get stuff to our users better?

Darren Oakey [01:15:39]:

On the flip side, it is giving people a false sense of this, in that you can get something up and running in like 10 minutes or something. But all the traditional software engineering in terms of robustness, in terms of logging and troubleshooting and debugging, security, all of that stuff, it hasn't gone away. And it's just as important. And for people who are just vibe coding, it's not obvious how you gain all of that. And even for people who know what they're doing, it's not obvious how you turn that thing that you just vibe coded into a robust thing, so that the next version isn't going to delete all the production data or something like that. And there is a missing piece that's not there, which is how do we turn this thing that we could create in five minutes on our own box into a production working system that a business can use to run their business. And there is still a gap there. And I think there is a danger that that gap is not obvious, that people then suddenly see, oh, you wrote it in five minutes, and they start

Juan (BlindWiz) [01:16:51]:

releasing alpha grade software. That's the terrifying bit of it.

Craig McFarlane (CraigM) [01:16:56]:

Yeah, some of it is also just, if it's an app for myself, then, you know, it's a user base of one, and if it's clunky, well, it's on me. I think there's a lot of vibe coding that falls down if you need multiple... you know, if it's a multi-user kind of thing. That's where, you know, Darren, as you're saying, traditional understanding of, you know, security roles and everything that goes along with that, that can easily get upside down in a heartbeat when people are vibe coding. But if it's just a one person app, you're probably pretty good because the

Juan (BlindWiz) [01:17:42]:

layman wouldn't know to. For example, I created a web app, and it was a Django app. So it starts with SQLite. Right. But there are parallel access issues once you start talking multi-user with SQLite. But a developer would know that. Right. And they would know you need to initialize a Postgres or MariaDB or something else that can handle that parallelism if you're going to go multi-user.

Juan (BlindWiz) [01:18:15]:

And so that's where the AI still kind of falls short in telling the user. Maybe, you know, I don't know, being more helpful. I don't know, sometimes I feel like they're too helpful.
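The SQLite limitation Juan describes can be reproduced in a few lines. This is only a minimal sketch using Python's standard `sqlite3` module, not anything from the Django app he mentions; the function name, table name, and temporary file path are made up for illustration. SQLite allows one writer at a time per database file, so a second connection that tries to write while the first holds the write lock fails instead of proceeding in parallel.

```python
import os
import sqlite3
import tempfile


def second_writer_is_blocked() -> bool:
    """Return True if a second connection cannot write while the first holds the write lock."""
    # Hypothetical throwaway database file, used only for this demonstration.
    path = os.path.join(tempfile.mkdtemp(), "demo.sqlite3")
    # isolation_level=None means we manage transactions explicitly;
    # timeout=0 makes a blocked writer fail immediately instead of waiting.
    first = sqlite3.connect(path, timeout=0, isolation_level=None)
    second = sqlite3.connect(path, timeout=0, isolation_level=None)
    try:
        first.execute("CREATE TABLE notes (body TEXT)")
        first.execute("BEGIN IMMEDIATE")  # first connection takes the single write lock
        first.execute("INSERT INTO notes VALUES ('from first')")
        try:
            second.execute("INSERT INTO notes VALUES ('from second')")
        except sqlite3.OperationalError:
            # SQLite reports the conflict as "database is locked".
            return True
        return False
    finally:
        first.close()
        second.close()


blocked = second_writer_is_blocked()
```

In a single-user app this never shows up, which is why the problem tends to surface only after a prototype gets real concurrent traffic. A client-server database such as Postgres or MariaDB handles concurrent writers on the server side.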

Larry Gold (LrAu) [01:18:25]:

But no, I do think again there's a second step. Once you get it there, this is where you have to know architecture, you have to know some of the stuff to be able to direct it. And as I said, the business could prototype something, hand it to your IT staff, and your IT staff can sit there and say, okay, how do I dissect this? How do I analyze this? So it does have all those non-functional requirements that fit an organizational structure. Right. The tools are very good at doing security analysis, so that does help. But they're not so great on contention or making decisions of what do you do if two people save at the same time, who wins? Right. Or take a shopping cart example, if two people try to buy the last item, how do you handle that? So stuff like that is both a requirements part as well as an engineering piece where you have to figure this out. Right.
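One common way to settle the "last item" question Larry raises is to make the stock check and the decrement a single atomic statement, so the database decides who wins. The sketch below is an illustration under assumptions, not something shown on the call: the `inventory` table, the `buy_one` helper, and the in-memory database are all hypothetical.

```python
import sqlite3


def buy_one(conn: sqlite3.Connection, item_id: int) -> bool:
    """Try to buy one unit; return True only if stock was actually decremented."""
    # The WHERE clause makes "check stock" and "take one" a single statement,
    # so two buyers can never both take the last unit.
    cur = conn.execute(
        "UPDATE inventory SET stock = stock - 1 WHERE id = ? AND stock > 0",
        (item_id,),
    )
    conn.commit()
    return cur.rowcount == 1


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE inventory (id INTEGER PRIMARY KEY, stock INTEGER NOT NULL)")
conn.execute("INSERT INTO inventory VALUES (1, 1)")  # exactly one unit left
conn.commit()

first_buyer = buy_one(conn, 1)   # gets the last item
second_buyer = buy_one(conn, 1)  # finds nothing left to buy
remaining = conn.execute("SELECT stock FROM inventory WHERE id = 1").fetchone()[0]
```

The engineering half is the conditional update. The requirements half is what the second buyer sees (an error, a waitlist, a back-order), and that still has to come from the business.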

Larry Gold (LrAu) [01:19:20]:

There's not a lot of businesses who will understand, do you need something that's last in, first out or first in, last out? The organization of how to scale it. Does it need to scale horizontally? Does it need to scale vertically? That's still a much different answer. But again, you can tell Claude, right, how to do some of these things and solve it. It will solve some of them. Right. But someone should be code reviewing that.
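For reference, the two ordering policies usually contrasted in this kind of decision are first in, first out (FIFO) and last in, first out (LIFO). The following is a small sketch with made-up order names and a hypothetical `drain` helper, showing how the same arrivals get served under each policy.

```python
from collections import deque


def drain(items, policy):
    """Return items in the order a queue with the given policy would serve them."""
    pending = deque(items)
    served = []
    while pending:
        # FIFO serves the oldest entry; anything else is treated as LIFO (newest first).
        served.append(pending.popleft() if policy == "fifo" else pending.pop())
    return served


arrivals = ["order-1", "order-2", "order-3"]  # hypothetical work items
fifo_order = drain(arrivals, "fifo")
lifo_order = drain(arrivals, "lifo")
```

Which policy is right depends on the business rule (fairness to the earliest customer versus freshest-first processing), which is the kind of decision a reviewer has to confirm rather than leave to the generated code.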

Juan (BlindWiz) [01:19:48]:

Always, always, always.

Craig McFarlane (CraigM) [01:19:50]: